Palantir's stock price went absurdly high around February-March 2025. But why did it become so popular?

It's a company that makes software that isn't widely known -- so what makes it so effective?

How much does it cost to use their products? I honestly didn't know much about the company.

What if Palantir digitally transforms every industry in the direction they want?

If that happened, I think they'd be able to run everything in simulation, create a virtual Earth, and keep everything under control.

Whether it's Palantir or Snowflake, what these companies are building can be seen as big data-based decision-making tools.

But I also think I'd want to automate the decision-making itself. Automatically send work status requests to employees so their tasks are reflected in real-time every day, receiving interim reports on how much was done and what was accomplished. If everything is tracked like Git commits, you could distinguish between people just padding their reports and those who are actually productive.

Palantir's competitors: Snowflake, Databricks, C3.AI (each specializes in different areas)

It seems like Snowflake is more highly regarded overseas.

The content below is from notes I took while watching Palantir's learning courses. There are links at the bottom of this post, so if you're interested, feel free to visit them directly for better information!

Foundry#

Foundry is the software's name: an operational decision-making platform.

It's a tool designed to empower all job roles across various industries -- not just developers -- to create impact through data and models.

It was designed to respond to urgent requirements like national crises.

It's built so that even security-sensitive customers can use it, handling financial data, personally identifiable information, health information, unclassified information, and classified government data. It meets regulatory requirements across the board including HIPAA, GDPR, and ITAR.

Industries that need digital transformation seem to be the priority, and Palantir's products seem most needed there. Peter Thiel once said that these industries are even more important to humanity than IT companies, and I think that's related.

Palantir's software needs to be flexibly presented so users can see it, and it must adapt to conditions that change over time for organizations to use it. It shouldn't be an environment that only data specialists can use -- true digital transformation can only happen when all teams and users are brought together. Companies struggle with building data pipelines and analyzing data for big data utilization.

Typically, analysis is considered done once a dashboard is provided. And the bigger problem is that it's not clear how much real change such investments actually bring.

Collecting data, building it, analyzing it, and making decisions leads to another problem. Nothing is connected end-to-end, so results and feedback don't flow back as data. That's why companies likely saw no improvement even after building big data infrastructure. That's why Foundry provides a sustainable approach. It connects the entire organization to enable digital transformation.

What Palantir means by digital transformation seems to include employees' behaviors and office work too.

Ontology#

Ontology and applications that support the Ontology

The Ontology is the core of Foundry and consists of multiple layers.

Layer 1 is the Semantic Layer: Connects all types of data sources within an organization into a shared digital representation. It's a collection of various nouns that make up the organization. For manufacturing or retail, these would be store locations, customers, transactions, logistics centers, and various assets, entities, and events that form the work environment.

There are relationships between these objects and events, and ways they interact. There are also dynamic properties driven by the semantic layer model. The semantic layer is a living, breathing representation and model.

Layer 2 is the Kinetic Layer: The moving organization. All actions, functions, and processes of the organization -- the verbs. It actually activates the Ontology.

It performs actions like: customer completes a transaction -> product arrives at logistics center -> shipped to store.

All objects, events, and actions are modeled in a shared way. Based on the organization's common language, it becomes the cornerstone for advanced decision-making, collaboration, and application development.

Layer 3 is the Dynamic Layer: Simulation, optimization, and process automation. It approaches an organization similarly to programming code. You can explore various scenarios, branch out, and see how scenarios affect downstream operations. At the point of decision-making, you can update these scenarios to strengthen the seamless connection between strategy and operations.

Usage starts with a single workflow and expands the Ontology. The Ontology begins with data integration. Using Foundry's various pipeline applications, you can integrate diverse data sources -- tabular data (databases), sensor data, transaction data, geospatial sources, third-party data (tools like Tableau), etc. -- with the Ontology.

When these data sources reach the Ontology, they're transformed into intuitive concepts related to real-world entities like customers, products, prices, warehouses, etc.

To build the Ontology, you need to think about structured and unstructured data. You also need to think about how to connect this data to the abstract world organized around your organization (the world integrated through Palantir Foundry).

It's not just about putting data in -- it operates based on models that work with specific data. To build models, you can use model building tools, stored procedures, third-party tools, open source, and more. Data scientists are typically the experts here.

These models are built based on small fragments of the past. They weren't created to be fully integrated into our organization. Even if you build a model, creating impactful output from it is even harder. And departments are siloed, so they can't properly leverage models.

Since people struggle to use models well, these models need to be integrated into shared systems like the Ontology to be utilized with any real value.

Because Foundry is an operational decision-making platform, detailed representation of each data point is needed to enhance numerous types of analytical capabilities. Data analysts need to compare how price changes (for example) actually impacted things against predictions. In Foundry, you can use dynamic properties of objects for this. Properties are based on models and provided data.

In this kind of analysis, app developers build operational apps that alert decision-makers to anomalies requiring attention (these are likely internal-use apps). In the example, an automatic price change could significantly suppress demand for a product. In such cases, what the model recognized may not fully explain the situation. That's when the subject matter expert needs to become the decision-maker and evaluate the situation. After this evaluation, it needs to flow back from the app to the Ontology. This is called write back. That's how the system gradually becomes more intelligent, gaining lots of information from end users -- prediction updates, actions, strategy updates, and more.

Using the Ontology, you can execute all operations across the underlying systems in a stable and controlled manner. You can make sophisticated decisions and provide decision-makers with the ability to run various "what-if" scenarios. By linking analysis with operational decision-making, it ensures every decision is as well-informed as possible.

The Ontology is accessible to framework builders, development teams, and even external partners across the entire enterprise.

It's provided through OPI (=API). So it can be used not only in Foundry's frontend but also in third-party apps.

The Ontology creates a common vocabulary for all participants in the organization, integrating disparate data sources, models, and systems, and enabling collaboration and dependent workflows.

Wouldn't big data change how work is done and even enable collaboration? If you look at data creation and data quality, you could probably tell who's doing well and who's not.

I think if people's duties are tied to the company's overall goals, it could help them understand how their work connects to and serves those goals.

Example: The mindset of a cafeteria worker

A: "I provide lunch for employees."

B: "Providing meat dishes boosted the company's revenue."

If you learn a meaning like B, your approach to decision-making changes.

When a company tries to solve a problem, issues also arise at various points -- work methods, management, operations, etc. -- during the solving process. If these aren't properly resolved, or are solved incorrectly, or left unresolved, wouldn't the company collapse before ever reaching the problem they set out to solve? Of course, revenue and profit can be more important.

Am I being provided with all the information I need to make decisions to solve the problem?

— Ted Mabrey

Improving decision-making doesn't come from standardized tasks -- it comes from real-time data flowing from my decisions that changes how we work.

When a problem occurs, you need to find the root cause, and that process isn't easy. But if you've adopted Foundry, the relationships are connected, so it would be relatively easier. And the Ontology can make it easier to solve problems. These improvements enable better decision-making.

Ontology Visualization#

The first bucket of the Ontology system is data.

Foundry has apps designed to connect, transform, integrate, and manage data.

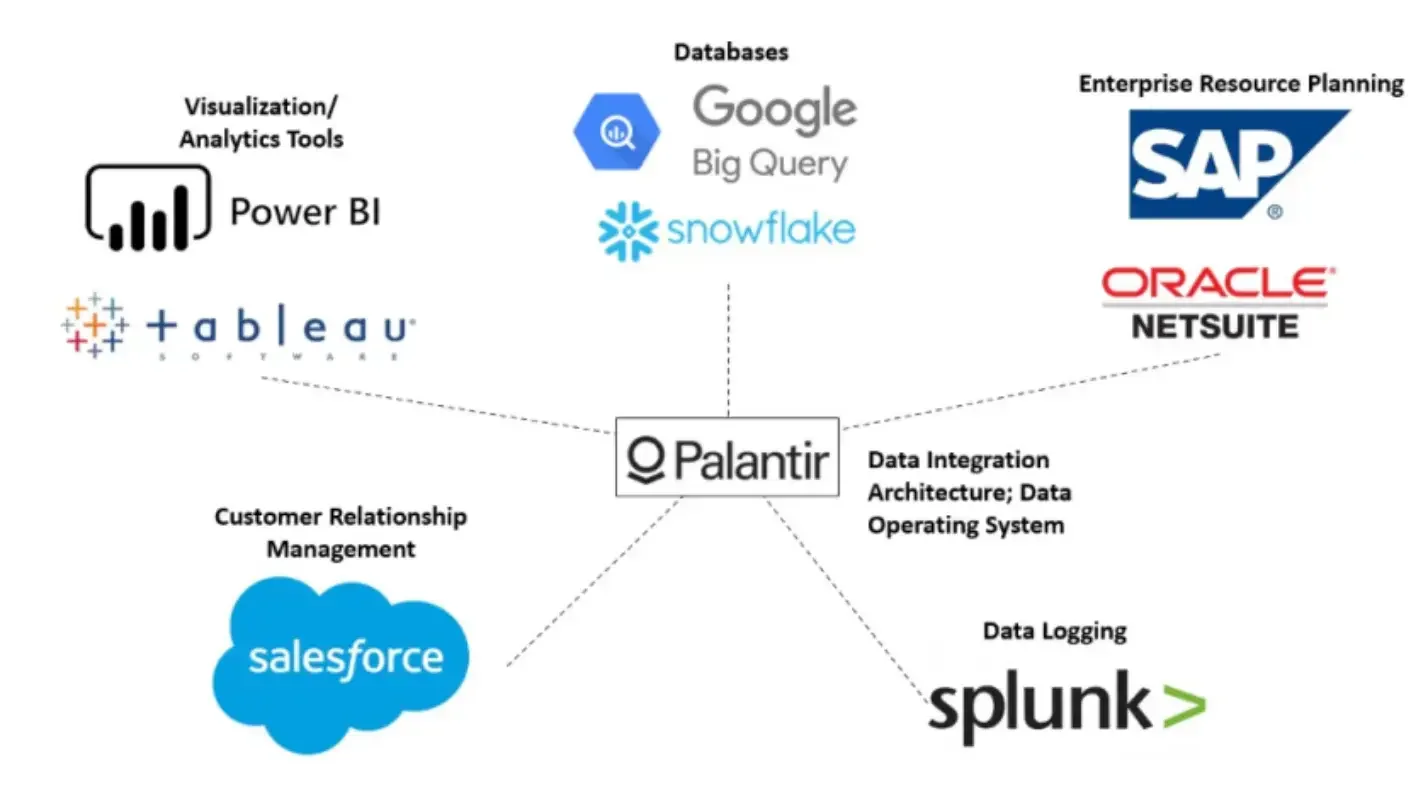

You can connect structured, unstructured, tabular, IoT, geospatial, and commercial data sources (SAP, Salesforce, Oracle, etc.) to Foundry.

The reason for this is that building big data means first gathering all kinds of data in one place. Then you need to connect, transform, and integrate it to make decisions. This process differs for every company, and it's daunting for people who don't know the right approach. Building big data without a plan for how to use it is like saying "We should have a team dinner" but not deciding what to eat before the dinner. You need priorities among clear goals.

What Is a Model?#

A model is like a cooking recipe.

A model is a recipe learned from data -- a special function that understands data.

Example: Spam email filter model

- Data (ingredients): Content, subjects, sender information from countless spam and legitimate emails

- Model (recipe): Rules and patterns that distinguish spam from legitimate emails (e.g., if certain words appear frequently, it's spam; if certain keywords aren't in the subject, it's legitimate)

- Result (dish): Classifying whether a new email is spam or legitimate

Example: Stock price prediction model

- Data (ingredients): Historical stock prices, interest rates, economic indicators, news articles, etc.

- Model (recipe): Factors and patterns that affect stock price movements (e.g., when interest rates rise, stock prices tend to fall)

- Result (dish): Predicting a specific company's stock price for tomorrow

If you use data without a model, it's too complex and vast, so mistakes can happen. Doing it this way every time is inefficient -- with a model, results are predicted and classified automatically. Hidden patterns with subtle information that humans might miss can't be found without models.

Model training is needed to create a model.

- Training dataset: e.g., cooking ingredients, photos of completed dishes / spam email examples, legitimate email examples

- Validation dataset: Like practice test questions for mid-checks. The model predicts with the validation dataset, compares results to answers, and gradually makes corrections and improvements

- Test dataset: Like a real exam for final evaluation after model training is complete. The model makes final predictions with test data, and performance is evaluated by comparing results to actual answers

Predictions are poor at first, but by reviewing mistakes with the validation dataset and studying again with the training dataset, predictions become increasingly accurate. This process is called optimization. The goal of optimization is to adjust the recipe to predict as accurately as possible.

= Maybe if we applied this process to students, they could do better on exams?

Good teachers (data), good textbooks (datasets), consistent effort (optimization), excellent models (students from top universities capable students)

A place is needed to carry out this learning process.

-

Dataset connection: Gathering scattered data in one place. Integrating multiple data sources into one. The process of preparing data.

e.g., Like bringing cooking ingredients from the fridge, pantry, market, and other places to the kitchen and organizing them. -

AWS SageMaker or GCP Vertex AI: Cloud-based professional kitchens for AI model development. Makes it easy to build powerful AI models.

e.g., Full cooking tool set: various tools, algorithm provision, automation -

Models are "recipes" learned from data, needed to utilize data efficiently.

-

Model training is the process of "feeding" data to models and "teaching" them recipes.

-

Dataset connection is the process of bringing data "ingredients" to the "kitchen" for model training.

-

GCP Vertex AI, AWS SageMaker are "cutting-edge cloud kitchens" for model development.

Foundry and Ontology's Analytics Capabilities

The apps that support data analysis are designed to be a common workbench where all types of users can investigate and explore the organization's digital twin.

Like AWS apps, Palantir also has various internal tools. How you use and connect them is up to you.

For analysis, you use Quiver. You can compare current analysis using models developed by data scientists. Having a strong integrated data and model foundation is very important.

Users may have reached different conclusions than the model's predictions, and they can provide feedback to colleagues (e.g., data scientists) to update the model to more accurately reflect the latest analysis results. Analysis workflows can occur at various levels of technical complexity. They sometimes serve as comprehensive decision support for operational workflows, requiring deep subject expertise and long-term investigation.

Operational users need to interact with prepared analysis to make the right decisions, and they should be able to change some parameters and evaluate results.

To enable numerous analytics capabilities, effective data democratization must be possible. Various analysis tools with appropriate levels of technical complexity are needed. Foundry provides these.

An important note is that in the process of democratizing access to the Ontology, the most important thing is whether security and permissions are centrally controlled and whether incorrect data analysis or wrong model usage can be circumvented.

The Ontology is centrally configured and managed, including permissions and access control, access type definitions, states, and more. Users of the Ontology can focus on analysis and operational decision-making instead of constantly setting and resetting important boundaries for exploration.

In any case, since this data is organically connected and feedback can be reflected immediately, when users (=practitioners) CUD values through apps built for internal/external use, it's reflected right away. And they provide the ability to build such custom apps.

Beyond displaying and analyzing the Ontology's objects and elements, Foundry supports app developers in building custom apps that operational users can use in their daily work.

Resources

- Palantir Documentation

- Ontology

- Platform Security, Projects and Key Security Boundaries, Security Authorization List

- Interoperability

- (Technical) User Roles

Manuals and Support Resources

Case Studies

- Digitizing an International Commercial Bank

- PG&E + Palantir

- Empowering Airbus Workers

- UK NHS and Foundry

- Research and Foundry

- More information can be found on Palantir's Impact Studies Homepage

Never mistake motion for action.

— Ernest Hemingway